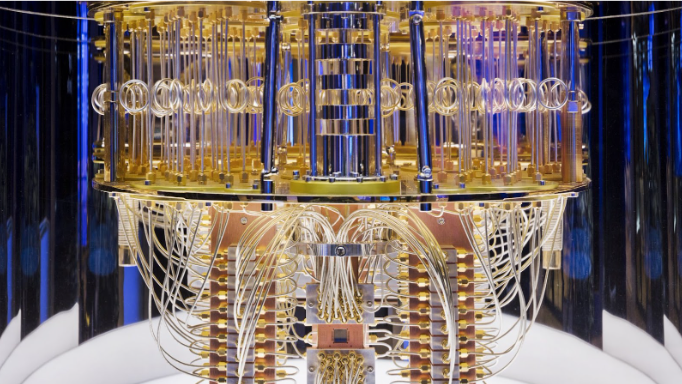

Quantum computing is emerging as a radical shift in how we process information. Rather than relying only on the familiar bits of 0 or 1 that power today’s computers, it exploits three features of quantum mechanics: superposition, entanglement and interference. A quantum bit, or qubit, can be in a weighted combination of ‘0’ and ‘1’ at once, and only settles on a single value when it is measured. When multiple qubits become entangled, their states become linked in ways that have no classical explanation. Quantum algorithms then use interference to boost the amplitudes of correct answers and suppress the wrong ones. This is subtler than simply trying every computational path in parallel, because a measurement returns only one outcome.

What does that buy us? For certain types of problems, this means a quantum computer may reach a solution dramatically faster than any classical computer. That said, quantum computing isn’t simply “better laptops” or “faster iPhones” — it’s a fundamentally different architecture, and one with serious engineering challenges. You can read a broad overview at Wikipedia’s “Quantum computing” page.

The Key Differences from Classical Computing

- Superposition: A classical bit is either 0 or 1. A qubit can be in a blend of both.

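- Entanglement: Two or more qubits can be linked so that their measurement outcomes are correlated more strongly than any classical system allows, even when the qubits are far apart. This does not allow information to be sent faster than light.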

- Interference: Quantum amplitudes can add or cancel out, enabling algorithms to steer toward correct answers and away from wrong ones.
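The three effects above can be seen in a tiny state-vector simulation. The following is a minimal sketch using only the Python standard library; the helper names (`apply_hadamard`, `apply_cnot`, `probabilities`) are made up for this illustration and do not come from any quantum SDK. It treats a quantum state as a plain list of amplitudes, which only works for a handful of qubits.

```python
import math

S = 1 / math.sqrt(2)

def apply_hadamard(state, qubit, n_qubits):
    """Apply a Hadamard gate to one qubit of an n-qubit amplitude list."""
    mask = 1 << (n_qubits - 1 - qubit)      # qubit 0 is the leftmost bit
    new = list(state)
    for i in range(len(state)):
        if not i & mask:                    # visit each (bit=0, bit=1) pair once
            a, b = state[i], state[i | mask]
            new[i] = S * (a + b)
            new[i | mask] = S * (a - b)
    return new

def apply_cnot(state, control, target, n_qubits):
    """Flip the target bit of every basis state whose control bit is 1."""
    c = 1 << (n_qubits - 1 - control)
    t = 1 << (n_qubits - 1 - target)
    new = list(state)
    for i in range(len(state)):
        if i & c:
            new[i ^ t] = state[i]
    return new

def probabilities(state):
    """Born rule: an outcome's probability is its squared amplitude magnitude."""
    return [abs(a) ** 2 for a in state]

# Superposition: H on |0> gives equal amplitudes for 0 and 1.
plus = apply_hadamard([1.0, 0.0], 0, 1)
superposition_probs = probabilities(plus)

# Interference: a second H makes the two contributions to |1> cancel.
interference_probs = probabilities(apply_hadamard(plus, 0, 1))

# Entanglement: H on qubit 0, then CNOT, gives (|00> + |11>) / sqrt(2).
bell = apply_cnot(apply_hadamard([1.0, 0.0, 0.0, 0.0], 0, 2), 0, 1, 2)
bell_probs = probabilities(bell)            # order: 00, 01, 10, 11

print([round(p, 3) for p in superposition_probs])
print([round(p, 3) for p in interference_probs])
print([round(p, 3) for p in bell_probs])
```

In the entangled case only the outcomes 00 and 11 have non-zero probability, so measuring one qubit tells you what the other will show, even though neither outcome was fixed in advance.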

These capabilities open the door to quantum algorithms like Shor’s algorithm (for factoring large numbers) and Grover’s algorithm (for unstructured search). The size of the theoretical gain differs: Shor’s algorithm runs in polynomial time, whereas the best known classical factoring methods take super-polynomial time, while Grover’s algorithm offers a quadratic speed-up, needing roughly √N steps instead of N. It’s important to remember that quantum computers aren’t generic “faster computers” for everything; they’re promising for specific domains where classical methods hit exponential barriers.
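To make the Grover speed-up concrete, here is a small classical simulation of the algorithm's amplitudes. It is a sketch under simplifying assumptions: there is exactly one marked item, the state is stored as an ordinary list (so it does not scale), and the function name `grover_success_probability` is invented for this example.

```python
import math

def grover_success_probability(n_items, marked, iterations):
    """Simulate Grover's search and return the chance of measuring `marked`."""
    # Start in the uniform superposition over all items.
    state = [1 / math.sqrt(n_items)] * n_items
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        state[marked] = -state[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(state) / n_items
        state = [2 * mean - a for a in state]
    return state[marked] ** 2

n_items = 64
# The usual choice is about (pi / 4) * sqrt(N) iterations.
best_iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
p_before = grover_success_probability(n_items, marked=5, iterations=0)
p_after = grover_success_probability(n_items, marked=5, iterations=best_iterations)
print(best_iterations, round(p_before, 4), round(p_after, 4))
```

With 64 items, a handful of iterations lifts the success probability from 1 in 64 to nearly 1, whereas a classical search would need to check about half the items on average. Each loop iteration is interference at work: the oracle marks the answer with a sign flip, and the diffusion step turns that sign difference into a larger amplitude.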

Plain-English Explanation of Quantum Error Correction

One of the biggest obstacles in building useful quantum computers is error. Qubits are extremely fragile: tiny disturbances (temperature fluctuations, electromagnetic noise, vibration) can flip their states or destroy coherence. Without protection, the computation collapses into junk.

Quantum error correction (QEC) is the technique that allows us to overcome this fragility and build logical qubits out of many physical qubits so that the system can detect and correct errors — much like how classical computers use parity bits or ECC memory. Here’s how to think of it in plain English:

- Imagine you have a valuable painting and you want to protect it from damage. Instead of storing just one copy, you make many copies of it, each stored in a separate locked vault. If one vault gets flooded, you still have other copies safe.

- In quantum terms, you encode a logical qubit using several physical qubits. Each physical qubit on its own is prone to error, but when you monitor them together, you can detect when something has gone wrong without looking directly at the quantum information (which would collapse the superposition).

- The system uses redundancy and checks (quantum syndrome measurements) to figure out “which physical qubit flipped or drifted” and then apply a corrective operation.

- However, unlike classical bits, you cannot simply “copy” unknown quantum states (that’s ruled out by the no-cloning theorem). So the “many copies” picture is only an analogy: quantum error correction spreads one state across several entangled qubits and relies on indirect measurements (syndromes) and smart encodings (like the surface code, Steane code, etc.).

- Crucially, the error rate of each physical qubit/gate must be below a certain threshold. Once you’re under the threshold, adding more physical qubits to each logical qubit suppresses logical errors instead of adding to them, so the logical qubit stays stable for longer (the so-called “fault-tolerant” regime).

- In short: Quantum error correction turns many unreliable parts into one reliable system. Without it, quantum computers cannot scale to the large problem sizes that might outperform classical computers.
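The detect-and-correct loop described above can be sketched with the simplest textbook example, the three-qubit bit-flip code. This is an illustration only: it handles a single bit-flip error and no phase errors, it reads parities straight from the simulated amplitudes (real hardware would measure them through extra ancilla qubits), and all function names are invented for this example.

```python
import math

def encode(alpha, beta):
    """Encode alpha|0> + beta|1> as alpha|000> + beta|111> (8 amplitudes)."""
    state = [0.0] * 8
    state[0b000] = alpha
    state[0b111] = beta
    return state

def apply_x(state, qubit):
    """Bit-flip (Pauli X) on one physical qubit; qubit 0 is the leftmost bit."""
    mask = 1 << (2 - qubit)
    flipped = [0.0] * 8
    for index in range(8):
        flipped[index ^ mask] = state[index]
    return flipped

def syndrome(state):
    """Return the parities of qubit pairs (0, 1) and (1, 2).

    After at most one bit flip, every populated basis state has the same
    parities, so the checks locate the error without revealing alpha or beta.
    """
    index = max(range(8), key=lambda i: abs(state[i]))
    b0, b1, b2 = (index >> 2) & 1, (index >> 1) & 1, index & 1
    return (b0 ^ b1, b1 ^ b2)

# Each syndrome points at the qubit to fix (None means no error detected).
DECODER = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(state):
    qubit = DECODER[syndrome(state)]
    return state if qubit is None else apply_x(state, qubit)

def same_state(a, b):
    return all(math.isclose(x, y, abs_tol=1e-12) for x, y in zip(a, b))

logical = encode(0.6, 0.8)
damaged = apply_x(logical, 1)           # noise flips the middle qubit
detected = syndrome(damaged)
recovered = correct(damaged)
print(detected, same_state(recovered, logical))
```

Note that `encode` does not copy the state three times; it builds one entangled state across three qubits, which is how the code sidesteps the no-cloning restriction. Practical codes such as the surface code follow the same pattern with many more qubits and checks for both bit-flip and phase errors.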

Researchers emphasise that getting error rates low enough and building error-corrected logical qubits remains one of the dominant technical challenges in the field (see an older critique from IEEE Spectrum).
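The threshold idea mentioned above can be illustrated with a classical toy model: a repetition code decoded by majority vote, where each physical bit flips independently with probability p. This is an assumption-heavy sketch, not a model of real hardware. In this toy the threshold sits at p = 0.5; realistic quantum codes have far lower thresholds, and the function name `logical_error_rate` is made up for the example.

```python
from math import comb

def logical_error_rate(p, distance):
    """Probability that majority vote fails for a repetition code.

    Assumes each of `distance` physical bits flips independently with
    probability p; the vote fails when more than half of them flip.
    """
    first_failing = distance // 2 + 1
    return sum(
        comb(distance, k) * p**k * (1 - p) ** (distance - k)
        for k in range(first_failing, distance + 1)
    )

for p in (0.01, 0.6):
    rates = [logical_error_rate(p, d) for d in (1, 3, 5, 7)]
    print(p, [f"{r:.2e}" for r in rates])
```

Below the threshold (p = 0.01), each increase in code size cuts the logical error rate sharply. Above it (p = 0.6), adding more bits makes things worse, which is why lowering physical error rates has to come before scaling up.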

Future Applications: Where Quantum Might Make the Big Difference

Here are some of the most promising application areas for quantum computing:

- Chemistry & Materials Science — Quantum systems are naturally suited to simulating other quantum systems. That means we could simulate molecules, catalysts, battery materials or superconductors far more accurately than classical computers allow.

- Optimization Problems — Logistics, supply chain, finance or portfolio allocation problems often involve enormous search spaces. Quantum or hybrid quantum-classical methods may find better solutions faster.

- Machine Learning & AI — Quantum-enhanced models or quantum sampling might augment or accelerate some parts of machine learning: either by training new architectures or offering different data-generation/sampling capabilities.

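- Cryptography & Secure Communications: On one hand, quantum computing threatens current public-key cryptography (for instance, Shor’s algorithm could break RSA by factoring large numbers). On the other, quantum physics enables new approaches such as quantum key distribution (QKD). Separately, post-quantum cryptography, which runs on ordinary classical computers, is being developed to resist quantum attacks.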

- Sensing & Timing: While not strictly “computation,” quantum devices are also promising for ultra-precise sensing, navigation, clocks and measurement applications (which have indirect computing/system implications).

These are not speculative far-off ideas: many companies and research labs are actively working toward them, even if practical, large-scale deployments are some years away.
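To see why factoring is the cryptographic weak point, it helps to look at the classical arithmetic that surrounds Shor's algorithm. Factoring a number n reduces to finding the order of some a modulo n, meaning the smallest r with a^r ≡ 1 (mod n). The sketch below finds the order by brute force, which is only feasible for toy numbers; that single step is the one a quantum computer would speed up. The function names are invented for this illustration.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This is the step Shor's algorithm replaces
    with quantum period finding.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(n, a):
    """Try to split n using the order of a modulo n; return None on failure."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared      # lucky guess already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                     # odd order: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                     # trivial square root: try a different a
    p = gcd(half - 1, n)
    return p, n // p

print(find_order(7, 15))          # 4
print(factor_from_order(15, 7))   # (3, 5)
```

For a 2048-bit RSA modulus the brute-force loop in `find_order` is hopeless, and that difficulty is what current public-key cryptography relies on.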

Recent Developments (2024–2025 Highlights)

Here are a few of the major recent moves in the quantum computing space:

- Google: Willow chip & algorithm milestone

Google announced a ~105-qubit “Willow” chip and a new algorithm reported as “Quantum Echoes” that achieved verifiable speed-ups for a quantum simulation task. This represents a step from “quantum supremacy” (just beating a classical computer) to application-oriented quantum advantage.

- IBM: Roadmap for fault-tolerant systems

IBM has published its quantum roadmap, investing in higher-connectivity processors, integration with high-performance classical computing, and data centres to host quantum hardware.

- Amazon Web Services (AWS): Prototype chips and cat qubits

AWS revealed prototype quantum hardware (the “Ocelot” chip) and research into advanced qubit encodings (like cat qubits) aiming to reduce the overhead required for error correction, a critical bottleneck for scaling.

- IonQ: Strategic expansion and sensing/communications

IonQ has been making acquisitions and expanding into sensing and quantum communications markets, signalling the field is entering a more mature phase where vendors offer integrated “quantum solutions” rather than purely hardware.

Collectively, these developments show a shift from “let’s build a quantum computer” to “let’s build a quantum ecosystem” that includes hardware, error correction, software, algorithms and use cases.

What to Watch in the Next Few Years

If you’re keeping an eye on quantum computing, here are key metrics and milestones to monitor:

- Error rate improvement and demonstrations of logical qubits that last significantly longer than current physical ones.

- Verifiable benchmarks of quantum systems solving real-world-oriented tasks faster (or better) than classical hardware, not just synthetic tests.

- Ecosystem consolidation: hardware, software, algorithms and industry use-cases converging into commercial offerings (especially for finance, materials science, pharma).

- Hybrid quantum-classical workflows, in which quantum accelerators augment classical systems, becoming mainstream in place of “pure quantum” ones.

- Post-quantum cryptography readiness: as quantum hardware advances, the cryptography world is actively adapting to resist quantum attacks.

Final Thoughts

Quantum computing holds the promise of recalibrating what’s possible in computing. Rather than incremental speed-ups, it offers qualitatively new computational paradigms. But it is not a magic wand: the engineering, error-correction, algorithmic and ecosystem challenges are very real. For businesses, researchers and tech-leaders, the opportunity is to stay prepared: understand when quantum advantage becomes practical, identify domains where it may hit first (chemistry, optimization, cryptography), and plan for a future where quantum and classical systems coexist.